Because the DLSS network continues to be trained in the cloud, the frame rate in supported games can also keep improving over time as updated versions of the algorithm are released.

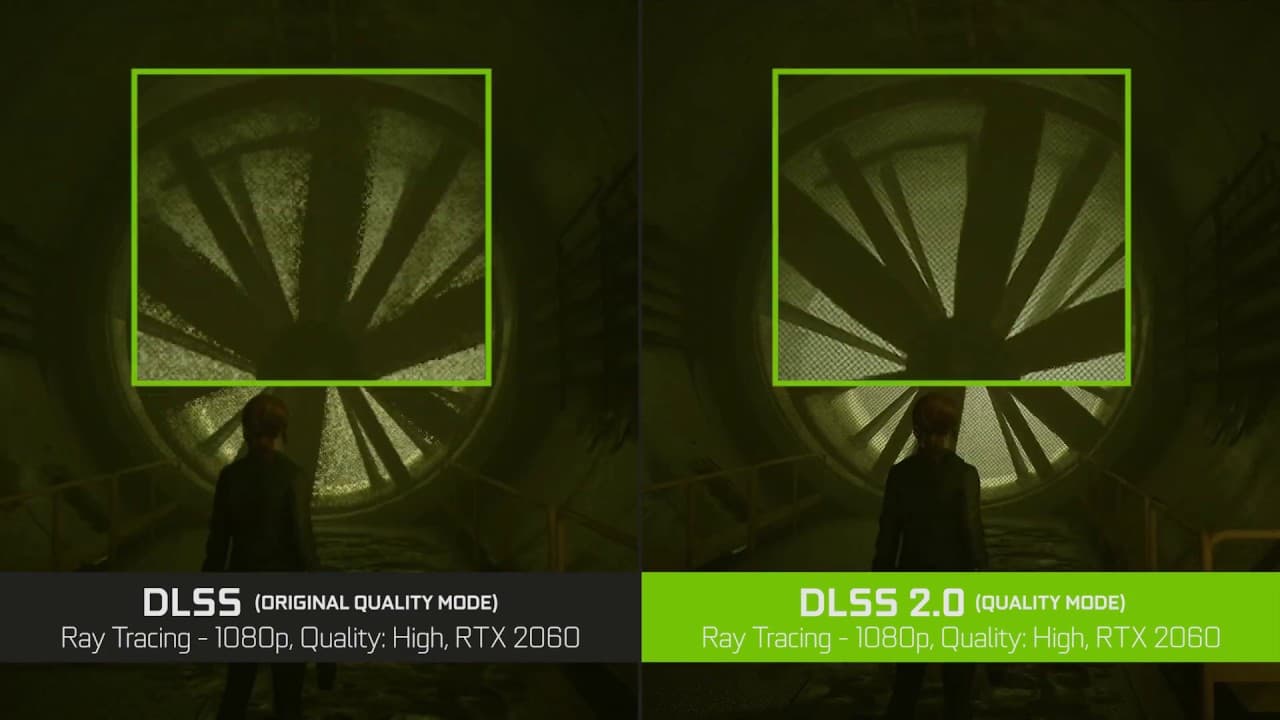

DLSS 2.0 offers three different image quality modes: quality mode, balanced mode, and performance mode.

These modes control the game's internal rendering resolution. Performance mode allows up to 4x super resolution: if you select 4K output in a DLSS 2.0-enabled game, the graphics card renders internally at 1080p, and the super-sampling algorithm then upscales the result to 4K. You get 4K output without a loss of image quality; NVIDIA even says that "DLSS 2.0 tuned picture quality is more fine than the previous native picture quality". As a result, the frame rate at 4K can increase significantly.
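
The arithmetic behind the "4x" figure can be checked with a short sketch. It assumes that 4x refers to pixel count, that is, a 2x scale along each axis, which is what the 1080p-to-4K example implies. The function names are hypothetical and are not part of any DLSS API.

```python
def internal_resolution(output_width, output_height, per_axis_scale):
    """Return the (width, height) rendered internally before upscaling."""
    return (round(output_width / per_axis_scale),
            round(output_height / per_axis_scale))


def pixel_ratio(output_size, internal_size):
    """Return how many output pixels are produced per rendered pixel."""
    out_w, out_h = output_size
    in_w, in_h = internal_size
    return (out_w * out_h) / (in_w * in_h)


# Performance mode as described above: 4K output, 2x scale per axis (assumed).
output = (3840, 2160)
internal = internal_resolution(*output, per_axis_scale=2.0)
print(internal, pixel_ratio(output, internal))  # (1920, 1080) 4.0
```

The GPU shades only a quarter of the output pixels in this case, which is where the frame-rate gain comes from.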

Most games today do not send a frame straight to the screen after rendering; the frame first goes through a series of post-processing steps. Anti-aliasing is one example, including TAA (temporal anti-aliasing) and FXAA (fast approximate anti-aliasing). However, these anti-aliasing passes and other image-optimization features have drawbacks, such as blurring and incorrect handling of some graphic elements.

Conventional algorithms alone cannot solve this type of problem, because a hand-written algorithm has no way of knowing what each element in the image actually is. For AI, however, this is a very good application. After tens or hundreds of thousands of training passes, the AI can identify the different picture elements and automatically fill in detail to produce high-quality results.

This is the basic principle of how DLSS works. According to NVIDIA, they first capture "perfect" frames of the game rendered with 64x full-screen anti-aliasing to use as reference images, and then capture the matching frames produced by normal rendering. DLSS is then trained to match the reference: it produces an output for each input, the difference between that output and the reference image is measured, and the network weights are adjusted according to that difference. After enough iterations, DLSS has a stable model for optimizing the image for a given application.
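
The loop described above (produce an output, measure the difference from the reference, adjust the weights) can be illustrated with a deliberately tiny sketch. This is not NVIDIA's implementation: the "network" here is just a 3-tap linear filter over a 1-D signal, the "reference" is produced by a hidden filter standing in for the 64x anti-aliased frame, and every name and parameter is hypothetical.

```python
import random


def taps(signal, i):
    """Return the left, center and right samples around index i (edges clamped)."""
    n = len(signal)
    return (signal[max(i - 1, 0)], signal[i], signal[min(i + 1, n - 1)])


def run_model(weights, rendered):
    """Produce an output for one input: a weighted sum of each pixel's neighbors."""
    return [sum(w * t for w, t in zip(weights, taps(rendered, i)))
            for i in range(len(rendered))]


def mean_squared_error(output, reference):
    """Measure the difference between the model output and the reference."""
    return sum((o - r) ** 2 for o, r in zip(output, reference)) / len(output)


def train(pairs, steps=2000, learning_rate=0.2, seed=0):
    """Adjust the weights by gradient descent on the squared difference."""
    rng = random.Random(seed)
    weights = [0.0, 1.0, 0.0]  # start as a pass-through filter
    for _ in range(steps):
        rendered, reference = rng.choice(pairs)
        output = run_model(weights, rendered)
        n = len(rendered)
        grads = [0.0, 0.0, 0.0]
        for i in range(n):
            error = output[i] - reference[i]
            for k, tap in enumerate(taps(rendered, i)):
                grads[k] += 2.0 * error * tap / n
        weights = [w - learning_rate * g for w, g in zip(weights, grads)]
    return weights


def make_toy_pairs(count=50, length=32, seed=1):
    """Build (rendered, reference) pairs; the hidden filter is a toy stand-in
    for the high-quality reference render."""
    rng = random.Random(seed)
    hidden = [0.25, 0.5, 0.25]
    pairs = []
    for _ in range(count):
        rendered = [rng.random() for _ in range(length)]
        pairs.append((rendered, run_model(hidden, rendered)))
    return pairs


if __name__ == "__main__":
    pairs = make_toy_pairs()
    weights = train(pairs)
    print("learned weights:", [round(w, 3) for w in weights])
```

A real network has millions of weights and works on 2-D frames, but the shape of the loop is the same: the weights only change in response to the measured difference from the reference.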
